One-variable real-valued function fitting with PyTorch

This post is part of a series on fitting mathematical objects (functions, curves and surfaces) with an MLP (Multi-Layer Perceptron) neural network; for an introduction to the subject, see the post Fitting with highly configurable multi layer perceptrons.

The topic of this post is the fitting of a continuous and bounded real-valued function defined on a closed interval of the reals, $$f(x) \colon [a,b] \to \rm I\!R$$, with an MLP. The user can test different combinations of MLP architectures and their activation functions, training algorithms and loss functions without writing code, working only from the command line with four Python scripts that separately implement the following features:

- Dataset generation

- MLP architecture definition + Training

- Prediction

- Visualization of the result

To get the code please see the paragraph Download of the complete code at the end of this post.

The exact same mechanism was also implemented with TensorFlow (with Keras); see the post One-variable real-valued function fitting with TensorFlow, also published on this website.

If you are interested in regression of a real-valued function of one variable with machine learning techniques, see the posts Fitting functions with a configurable XGBoost regressor, Polynomial regression with Accord.NET and SMO regression for SVM with PUK kernel in Weka.

Dataset generation

The goal of the fx_gen.py Python program is to generate the datasets (both training and test) used in the later phases. It takes on the command line the function to be approximated (in lambda body syntax) and the interval of the independent variable (begin, end and discretization step), and it generates the dataset in an output csv file by applying the function to the passed interval.

The output csv file has two columns (without header): the first column contains the sorted values of the independent variable $x$ within the passed interval, discretized by the discretization step; the second column contains the values of the dependent variable, i.e. the values of the function $f(x)$ corresponding to the values of $x$ in the first column.
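The generation step described above can be sketched in a few lines. This is only an illustration and not the actual fx_gen.py code: the helper names `generate_dataset` and `write_csv` are hypothetical, and treating the interval as half-open (end excluded, as `np.arange` does) is an assumption.

```python
import csv
import io

import numpy as np


def generate_dataset(fx_body, begin, end, step):
    """Evaluate the function given as a lambda body on a regular grid of x values."""
    # Build a callable from the lambda body; only `np` is exposed to the expression.
    # eval() must only be used on expressions you trust.
    f = eval("lambda x: " + fx_body, {"np": np})
    x = np.arange(begin, end, step, dtype=float)  # assumption: end is excluded
    y = f(x)
    return x, y


def write_csv(stream, x, y):
    """Write two columns without header: x and f(x)."""
    writer = csv.writer(stream)
    for xi, yi in zip(x, y):
        writer.writerow([float(xi), float(yi)])


x, y = generate_dataset("np.sqrt(np.abs(x))", -5.0, 5.0, 0.01)
buffer = io.StringIO()  # stands in for the output csv file
write_csv(buffer, x, y)
```

Writing to an in-memory buffer keeps the sketch free of side effects; passing an open file object instead would produce the csv on disk.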

To get the program usage, run the following command:

$ python fx_gen.py --help

usage: fx_gen.py [-h]

-h, --help show this help message and exit

--dsout DS_OUTPUT_FILENAME dataset output file (csv format)

--fx FUNC_X_BODY f(x) body (body lamba format)

--rbegin RANGE_BEGIN begin range (default:-5.0)

--rend RANGE_END end range (default:+5.0)

--rstep RANGE_STEP step range (default: 0.01)

An example of using the program fx_gen.py

Suppose you want to approximate the function $$f(x)=\sqrt{|x|}$$ in the range $[-5.0,5.0]$. Keeping in mind that np is the alias of the NumPy library, the translation of this function into Python lambda body syntax is:

np.sqrt(np.abs(x))

To generate the training dataset, run the following command:

$ python fx_gen.py \

--dsout mytrain.csv \

--fx "np.sqrt(np.abs(x))" \

--rbegin -5.0 \

--rend 5.0 \

--rstep 0.01

To generate the test dataset, with a different discretization step, run the following command:

$ python fx_gen.py \

--dsout mytest.csv \

--fx "np.sqrt(np.abs(x))" \

--rbegin -5.0 \

--rend 5.0 \

--rstep 0.0475

MLP architecture definition + Training

The goal of the fx_fit.py Python program is to dynamically create an MLP and train it according to the parameters passed on the command line.
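To give an idea of what "dynamically creating" an MLP can look like, here is a minimal sketch that builds a network from a list of hidden layer sizes and a list of activation expressions. It is not the actual fx_fit.py code: the function name `build_mlp` is hypothetical, and both the single-neuron input/output layers and the absence of an activation on the output are assumptions made for fitting a scalar function of one variable.

```python
import torch
import torch.nn as nn


def build_mlp(hidden_sizes, hidden_activations):
    """Build a 1-input, 1-output MLP from hidden layer sizes and activation expressions."""
    if len(hidden_sizes) != len(hidden_activations):
        raise ValueError("one activation is needed per hidden layer")
    layers = []
    in_features = 1  # a single independent variable x
    for size, activation in zip(hidden_sizes, hidden_activations):
        layers.append(nn.Linear(in_features, size))
        # Resolve an expression such as "Tanh()" inside torch.nn.
        # eval() must only be used on expressions you trust.
        layers.append(eval("nn." + activation, {"nn": nn}))
        in_features = size
    layers.append(nn.Linear(in_features, 1))  # assumption: no output activation
    return nn.Sequential(*layers)


model = build_mlp([200, 300, 200], ["Tanh()", "ReLU()", "Tanh()"])
print(model)
```

Evaluating the activation as an expression, rather than looking up a class name, lets constructor arguments such as `LeakyReLU(0.2)` be passed as plain strings.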

To get the program usage, run the following command:

$ python fx_fit.py --help

usage: fx_fit.py [-h]

--trainds TRAIN_DATASET_FILENAME

--modelout MODEL_PATH

[--epochs EPOCHS]

[--batch_size BATCH_SIZE]

[--hlayers HIDDEN_LAYERS_LAYOUT [HIDDEN_LAYERS_LAYOUT ...]]

[--hactivations ACTIVATION_FUNCTIONS [ACTIVATION_FUNCTIONS ...]]

[--optimizer OPTIMIZER]

[--loss LOSS]

[--device DEVICE]

An example of using the program fx_fit.py

Suppose you have a training dataset available (for example generated with the fx_gen.py program as shown in the previous paragraph) and you want the MLP to have three hidden layers with 200, 300 and 200 neurons respectively, using the activation functions Tanh, ReLU and Tanh again on their outputs. Moreover, you want to perform 500 training epochs with a batch size of 100 items, using the RMSprop optimizer with a learning rate of 0.01 and SmoothL1Loss as the loss function. To put all this into action, run the following command:

$ python fx_fit.py \

--trainds mytrain.csv \

--modelout mymodel.pth \

--hlayers 200 300 200 \

--hactivations 'Tanh()' 'ReLU()' 'Tanh()' \

--loss 'SmoothL1Loss()' \

--optimizer 'RMSprop(lr=0.01)' \

--epochs 500 \

--batch_size 100

mymodel.pth will contain the MLP model trained on the mytrain.csv dataset according to the parameters passed on the command line.
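For readers curious about what such a training run involves, the following is a rough sketch of a mini-batch loop with the same loss, optimizer and batch size as the command above. It is not the fx_fit.py implementation: the network is deliberately smaller, only 20 epochs are run to keep it quick, the data is generated in memory instead of being read from mytrain.csv, and saving the `state_dict` (rather than the whole model) is an assumption.

```python
import io

import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Stand-in for the training csv: x on a regular grid and y = sqrt(|x|).
x = torch.arange(-5.0, 5.0, 0.01).unsqueeze(1)
y = torch.sqrt(torch.abs(x))
loader = DataLoader(TensorDataset(x, y), batch_size=100, shuffle=True)

# A much smaller network than 200-300-200, only to keep the sketch fast.
model = nn.Sequential(nn.Linear(1, 32), nn.Tanh(), nn.Linear(32, 1))
loss_fn = nn.SmoothL1Loss()
optimizer = torch.optim.RMSprop(model.parameters(), lr=0.01)


def full_dataset_loss():
    """Loss over the whole dataset, computed without tracking gradients."""
    with torch.no_grad():
        return loss_fn(model(x), y).item()


initial_loss = full_dataset_loss()
for epoch in range(20):  # the example command uses 500 epochs
    for xb, yb in loader:
        optimizer.zero_grad()
        loss = loss_fn(model(xb), yb)
        loss.backward()
        optimizer.step()
final_loss = full_dataset_loss()
print(f"loss before: {initial_loss:.4f}, after: {final_loss:.4f}")

# Serialize the learned weights; a file path could be passed instead of a buffer.
buffer = io.BytesIO()
torch.save(model.state_dict(), buffer)
```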

Prediction

The goal of the fx_predict.py Python program is to apply the MLP model generated by fx_fit.py to an input dataset (for example the test dataset generated with the fx_gen.py program as shown in a previous paragraph). The program produces as output a csv file with two columns (without header): the first column contains the values of the independent variable $x$ taken from the input dataset, and the second column contains the predicted values of the dependent variable, i.e. the values of the prediction corresponding to the values of $x$ in the first column.

To get the program usage, run the following command:

$ python fx_predict.py --help

usage: fx_predict.py [-h]

--model MODEL_PATH

--ds DATASET_FILENAME

--predictionout PREDICTION_DATA_FILENAME

[--device DEVICE]

An example of using the program fx_predict.py

Suppose you have the test dataset mytest.csv available (for example generated with the fx_gen.py program as shown in a previous paragraph) and the trained MLP model in the file mymodel.pth (generated with the fx_fit.py program as shown in the example of the previous paragraph); run the following command:

$ python fx_predict.py \

--model mymodel.pth \

--ds mytest.csv \

--predictionout myprediction.csv

myprediction.csv will contain the MLP's approximation of the initial function, evaluated at the $x$ values of mytest.csv.
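The prediction step can be pictured with the short sketch below. It is an illustration under assumptions, not the fx_predict.py code: an untrained stand-in network replaces the model that would normally be restored from mymodel.pth, a handful of hard-coded values replaces the first column of mytest.csv, and the output goes to an in-memory buffer.

```python
import csv
import io

import torch
import torch.nn as nn

# Stand-in for the trained model; in practice it would be restored from the saved file.
model = nn.Sequential(nn.Linear(1, 16), nn.Tanh(), nn.Linear(16, 1))

# Stand-in for the x column of the test dataset.
x_values = [-5.0, -2.5, 0.0, 2.5, 5.0]
x = torch.tensor(x_values).unsqueeze(1)  # shape (n, 1): one feature per sample

model.eval()  # switch layers such as dropout or batch norm to inference behavior
with torch.no_grad():  # gradients are not needed for prediction
    y_pred = model(x).squeeze(1).tolist()

# Two columns without header: x and the predicted f(x).
out = io.StringIO()
writer = csv.writer(out)
for xi, yi in zip(x_values, y_pred):
    writer.writerow([xi, yi])
```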

Visualization of the result

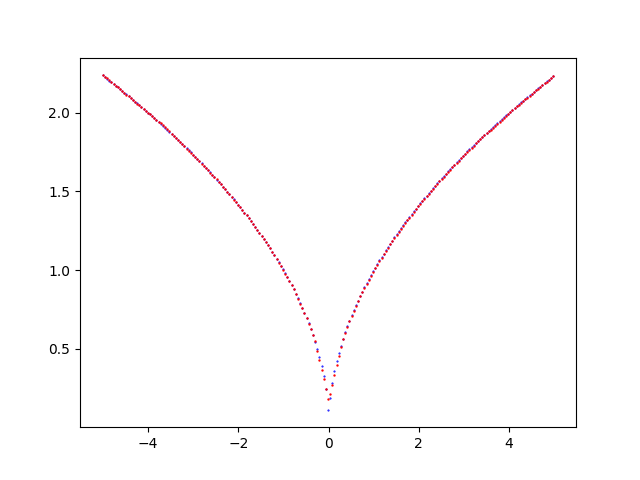

The goal of the fx_plot.py Python program is to visualize the prediction curve superimposed on the initial dataset curve (test or training, as you prefer), allowing a visual comparison of the two curves.
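A superimposed chart of this kind can be produced with Matplotlib along the following lines. This is a hedged sketch rather than the fx_plot.py code: the colors, marker sizes and labels are arbitrary choices, and the "prediction" is faked by adding small noise to the true function so that the example runs on its own.

```python
import io

import matplotlib

matplotlib.use("Agg")  # render off-screen; remove this line to open an interactive window
import matplotlib.pyplot as plt
import numpy as np

# Stand-ins for the two csv files: the dataset and a fake, slightly noisy prediction.
x = np.arange(-5.0, 5.0, 0.0475)
y_true = np.sqrt(np.abs(x))
y_pred = y_true + np.random.default_rng(0).normal(scale=0.02, size=x.shape)

fig, ax = plt.subplots()
ax.scatter(x, y_true, s=4, color="blue", label="dataset")
ax.scatter(x, y_pred, s=4, color="red", label="prediction")
ax.set_xlabel("x")
ax.set_ylabel("f(x)")
ax.legend()

# Save the figure as PNG; a file name could be passed instead of a buffer.
image = io.BytesIO()
fig.savefig(image, format="png")
plt.close(fig)
```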

To get the program usage, run the following command:

$ python fx_plot.py --help

usage: fx_plot.py [-h]

--ds DATASET_FILENAME

--prediction PREDICTION_DATA_FILENAME

[--savefig SAVE_FIGURE_FILENAME]

An example of using the program fx_plot.py

Having the test dataset mytest.csv available (for example generated with the fx_gen.py program as shown in a previous paragraph) and the prediction csv file (generated with the fx_predict.py program as shown in the previous paragraph), execute the following command to generate the two xy-scatter charts:

$ python fx_plot.py \

--ds mytest.csv \

--prediction myprediction.csv

Note: Given the stochastic nature of the training phase, your specific results may vary. Consider running the example a few times.

Chart generated by fx_plot.py that shows the fitting of the function $f(x)=\sqrt{|x|}$ made by the MLP.

Examples of cascade use of the four programs

The folder one-variable-function-fitting/examples contains nine shell scripts that show the cascade use of the four programs with various combinations of parameters (MLP architecture, activation functions, optimization algorithm, loss function, training procedure parameters).

To run the nine examples, run the following commands:

$ cd one-variable-function-fitting/examples

$ sh example1.sh

$ sh example2.sh

$ sh example3.sh

$ sh example4.sh

$ sh example5.sh

$ sh example6.sh

$ sh example7.sh

$ sh example8.sh

$ sh example9.sh

Download of the complete code

The complete code is available at GitHub.

These materials are distributed under the MIT license; feel free to use, share, fork and adapt them as you see fit.

Also, please feel free to submit pull requests and bug reports to this GitHub repository, or contact me on my social media channels available in the top right corner of this page.